Multinomial test

In statistics, the multinomial test is the test of the null hypothesis that the parameters of a multinomial distribution equal specified values. It is used for categorical data; see Read and Cressie[1].

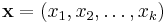

We begin with a sample of  items each of which has been observed to fall into one of

items each of which has been observed to fall into one of  categories. We can define

categories. We can define  as the observed numbers of items in each cell. Hence

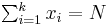

as the observed numbers of items in each cell. Hence  .

.

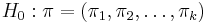

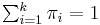

Next, we define a vector of parameters  , where :

, where : . These are the parameter values under the null hypothesis.

. These are the parameter values under the null hypothesis.

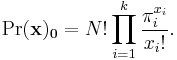

The exact probability of the observed configuration  under the null hypothesis is given by

under the null hypothesis is given by

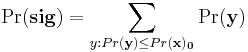
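As an illustration, the multinomial probability of an observed configuration under the null hypothesis can be evaluated in log space to avoid overflow in the factorials. This is a minimal sketch; the function name `multinomial_pmf` and the example counts are assumptions made for illustration only.

```python
from math import exp, lgamma, log


def multinomial_pmf(x, pi):
    """Probability of the count vector x under category probabilities pi."""
    n = sum(x)
    # log N! - sum(log x_i!) + sum(x_i * log pi_i), accumulated in log space
    log_p = lgamma(n + 1)
    for x_i, pi_i in zip(x, pi):
        log_p -= lgamma(x_i + 1)
        if x_i > 0:
            if pi_i == 0:
                return 0.0  # an impossible category was observed
            log_p += x_i * log(pi_i)
    return exp(log_p)


# Three items, one in each of three equally likely categories: 3! / 27
p_null = multinomial_pmf([1, 1, 1], [1 / 3, 1 / 3, 1 / 3])
```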

The significance probability for the test is the probability of occurrence of the data set observed, or of a data set less likely than that observed, if the null hypothesis is true. Using an exact test, this is calculated as

where the sum ranges over all outcomes as likely as, or less likely than, that observed. In practice this becomes computationally onerous as  and

and  increase so it is probably only worth using exact tests for small samples. For larger samples, asymptotic approximations are accurate enough and easier to calculate.

increase so it is probably only worth using exact tests for small samples. For larger samples, asymptotic approximations are accurate enough and easier to calculate.

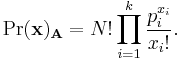
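The exact test can be sketched by brute-force enumeration of every way to distribute the items over the categories. The helper names (`compositions`, `exact_multinomial_test`) and the relative tolerance used to treat floating-point ties as "equally likely" are assumptions of this sketch, not part of the test's definition. The enumeration grows rapidly with the sample size and the number of categories, which is why this approach only suits small samples.

```python
from math import exp, lgamma, log


def multinomial_pmf(x, pi):
    """Probability of the count vector x under category probabilities pi."""
    log_p = lgamma(sum(x) + 1)
    for x_i, pi_i in zip(x, pi):
        log_p -= lgamma(x_i + 1)
        if x_i > 0:
            if pi_i == 0:
                return 0.0
            log_p += x_i * log(pi_i)
    return exp(log_p)


def compositions(n, k):
    """Yield every tuple of k non-negative integers that sums to n."""
    if k == 1:
        yield (n,)
        return
    for first in range(n + 1):
        for rest in compositions(n - first, k - 1):
            yield (first,) + rest


def exact_multinomial_test(x, pi, rel_tol=1e-7):
    """Sum the probabilities of all outcomes no more likely than x."""
    p_obs = multinomial_pmf(x, pi)
    # The tolerance keeps outcomes that tie with x up to rounding error.
    threshold = p_obs * (1 + rel_tol)
    total = 0.0
    for y in compositions(sum(x), len(x)):
        p_y = multinomial_pmf(y, pi)
        if p_y <= threshold:
            total += p_y
    return min(1.0, total)


uniform = [1 / 3, 1 / 3, 1 / 3]
p_extreme = exact_multinomial_test((3, 0, 0), uniform)   # 3/27
p_moderate = exact_multinomial_test((2, 1, 0), uniform)  # 21/27
```

With three items and three equally likely categories, the three outcomes of the form (3, 0, 0) each have probability 1/27, so observing one of them gives a significance probability of 3/27.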

One of these approximations is the likelihood ratio. We set up an alternative hypothesis under which each value  is replaced by its maximum likelihood estimate

is replaced by its maximum likelihood estimate  . The exact probability of the observed configuration

. The exact probability of the observed configuration  under the alternative hypothesis is given by

under the alternative hypothesis is given by

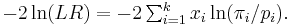

The natural logarithm of the ratio between these two probabilities multiplied by  is then the statistic for the likelihood ratio test

is then the statistic for the likelihood ratio test
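The likelihood ratio statistic can be computed directly from the counts, since the factorial terms cancel in the ratio of the two probabilities. A minimal sketch follows; the function name and the example data are illustrative assumptions, and empty cells are taken to contribute zero to the sum.

```python
from math import log


def likelihood_ratio_statistic(x, pi):
    """Return -2 ln(LR) for observed counts x and null probabilities pi."""
    n = sum(x)
    total = 0.0
    for x_i, pi_i in zip(x, pi):
        if x_i > 0:  # a cell with x_i = 0 adds nothing to the sum
            p_i = x_i / n  # maximum likelihood estimate of pi_i
            total += x_i * log(pi_i / p_i)
    return -2.0 * total


# 30 items in two categories, tested against equal probabilities
g = likelihood_ratio_statistic([20, 10], [0.5, 0.5])
```

The result would then be compared with a chi-squared distribution with one fewer degrees of freedom than the number of categories.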

If the null hypothesis is true, then as  increases, the distribution of

increases, the distribution of  converges to that of chi-squared with

converges to that of chi-squared with  degrees of freedom. However it has long been known (e.g. Lawley 1956) that for finite sample sizes, the moments of

degrees of freedom. However it has long been known (e.g. Lawley 1956) that for finite sample sizes, the moments of  are greater than those of chi-squared, thus inflating the probability of type I errors (false positives). The difference between the moments of chi-squared and those of the test statistic are a function of

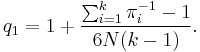

are greater than those of chi-squared, thus inflating the probability of type I errors (false positives). The difference between the moments of chi-squared and those of the test statistic are a function of  . Williams (1976) showed that the first moment can be matched as far as

. Williams (1976) showed that the first moment can be matched as far as  if the test statistic is divided by a factor given by

if the test statistic is divided by a factor given by

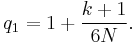

In the special case where the null hypothesis is that all the values  are equal to

are equal to  (i.e. it stipulates a uniform distribution), this simplifies to

(i.e. it stipulates a uniform distribution), this simplifies to

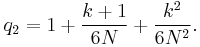

Subsequently, Smith et al. (1981) derived a dividing factor which matches the first moment as far as  . For the case of equal values of

. For the case of equal values of  , this factor is

, this factor is

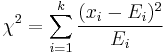
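The dividing factors are simple to evaluate once the sample size and the null probabilities are known. The sketch below assumes the Williams factor is $1 + (\sum_i \pi_i^{-1} - 1) / (6N(k-1))$ and the Smith et al. factor for equal probabilities is $1 + (k+1)/(6N) + k^2/(6N^2)$; the function names are hypothetical.

```python
def williams_q1(pi, n):
    """Williams (1976) dividing factor for null probabilities pi and sample size n."""
    k = len(pi)
    return 1.0 + (sum(1.0 / p for p in pi) - 1.0) / (6.0 * n * (k - 1))


def smith_q2_uniform(k, n):
    """Smith et al. (1981) dividing factor when all k null probabilities are equal."""
    return 1.0 + (k + 1) / (6.0 * n) + k ** 2 / (6.0 * n ** 2)


# Four equally likely categories and 20 items
q1 = williams_q1([0.25, 0.25, 0.25, 0.25], 20)
q2 = smith_q2_uniform(4, 20)
```

The corrected statistic is the likelihood ratio statistic divided by the chosen factor. For uniform probabilities the general Williams factor reduces to $1 + (k+1)/(6N)$, which the first call above reproduces.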

The null hypothesis can also be tested by using Pearson's chi-squared test

$$\chi^2 = \sum_{i=1}^{k} \frac{(x_i - E_i)^2}{E_i}$$

where $E_i = N\pi_i$ is the expected number of cases in category $i$ under the null hypothesis. This statistic also converges to a chi-squared distribution with $k-1$ degrees of freedom when the null hypothesis is true, but does so from below, as it were, rather than from above as $-2\ln(LR)$ does, so it may be preferable to the uncorrected version of $-2\ln(LR)$ for small samples.
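Pearson's statistic can be sketched in the same way as the likelihood ratio statistic. The function name and the example counts are assumptions for illustration, and every null probability is assumed to be strictly positive so that no expected count is zero.

```python
def pearson_chi_squared(x, pi):
    """Pearson's chi-squared statistic for counts x and null probabilities pi."""
    n = sum(x)
    total = 0.0
    for x_i, pi_i in zip(x, pi):
        expected = n * pi_i  # E_i, the expected count under the null hypothesis
        total += (x_i - expected) ** 2 / expected
    return total


# 30 items in two categories, tested against equal probabilities
chi2 = pearson_chi_squared([20, 10], [0.5, 0.5])
```

For these counts each expected value is 15, so the statistic is (25 + 25) / 15.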

References

- Read, T. R. C. and Cressie, N. A. C. (1988). Goodness-of-fit statistics for discrete multivariate data. New York: Springer-Verlag. ISBN 0-387-96682-X.

- Lawley, D. N. (1956). "A General Method of Approximating to the Distribution of Likelihood Ratio Criteria". Biometrika 43: 295–303.

- Smith, P. J., Rae, D. S., Manderscheid, R. W. and Silbergeld, S. (1981). "Approximating the Moments and Distribution of the Likelihood Ratio Statistic for Multinomial Goodness of Fit". Journal of the American Statistical Association (American Statistical Association) 76 (375): 737–740. doi:10.2307/2287541. JSTOR 2287541.

- Williams, D. A. (1976). "Improved Likelihood Ratio Tests for Complete Contingency Tables". Biometrika 63: 33–37. doi:10.1093/biomet/63.1.33.